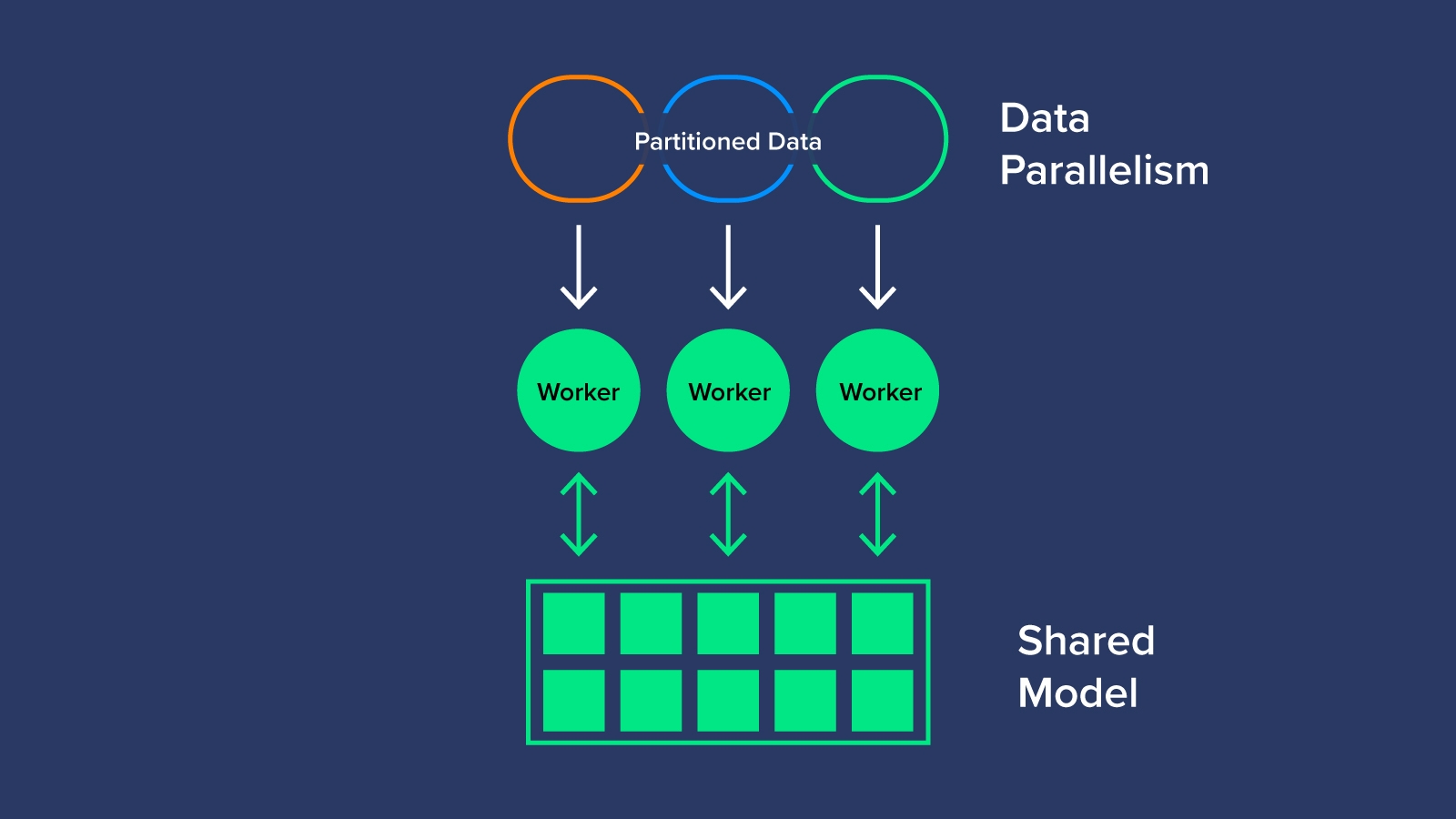

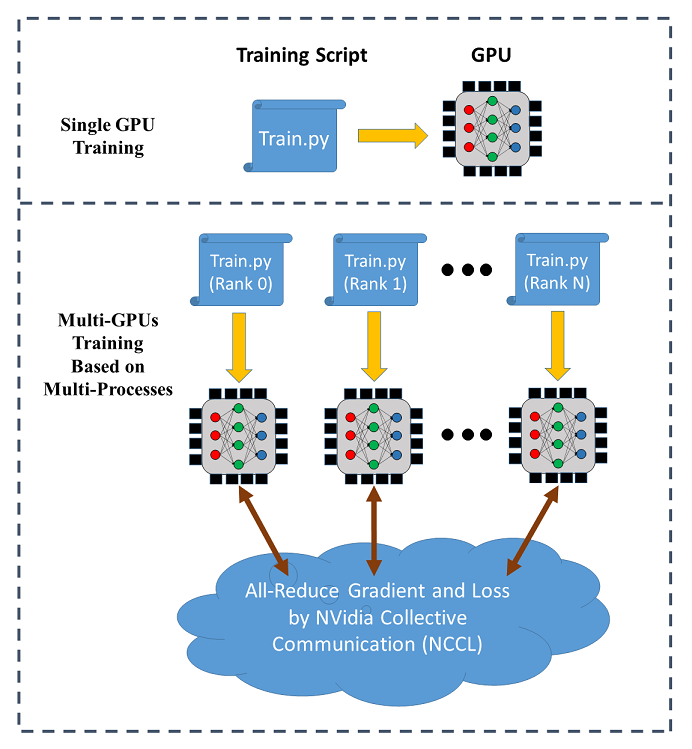

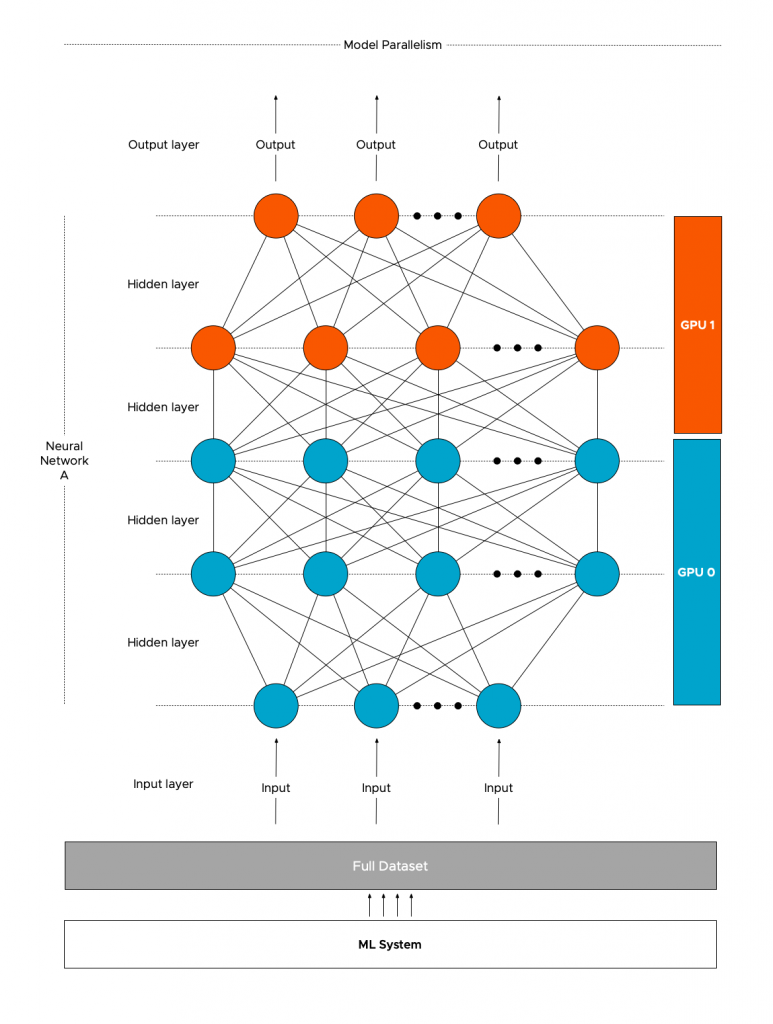

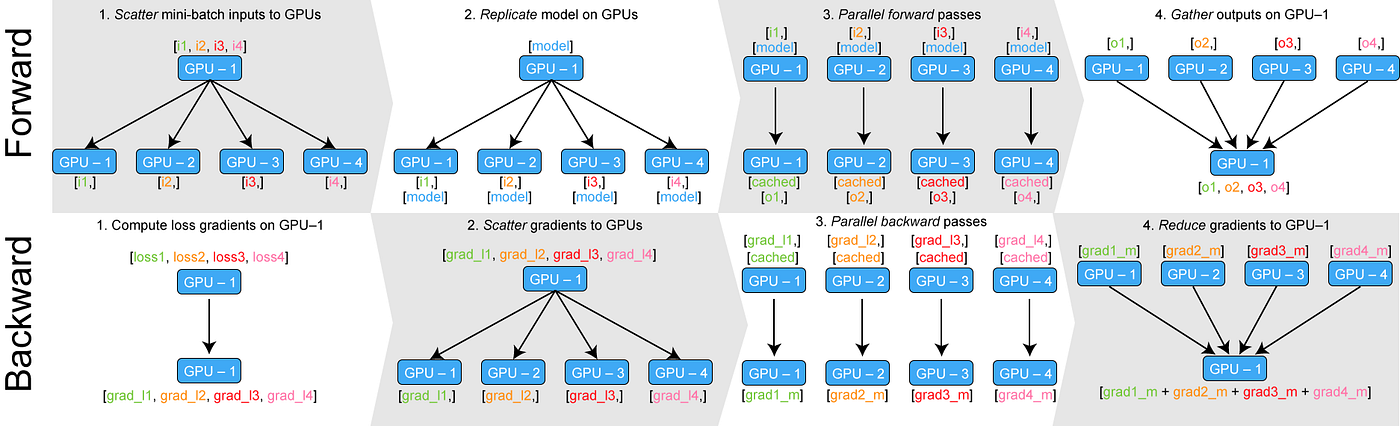

Learn PyTorch Multi-GPU properly. I'm Matthew, a carrot market machine… | by The Black Knight | Medium

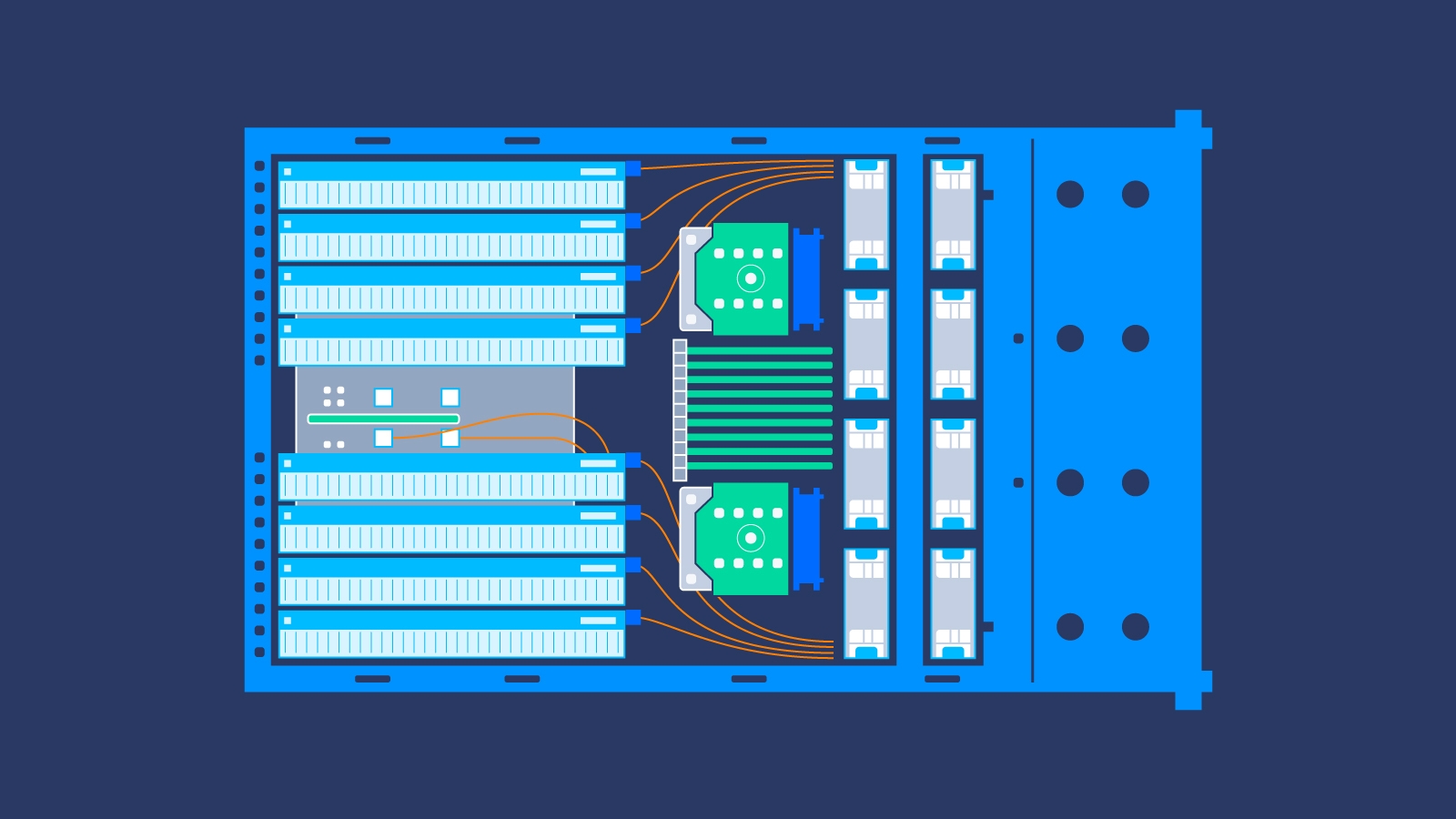

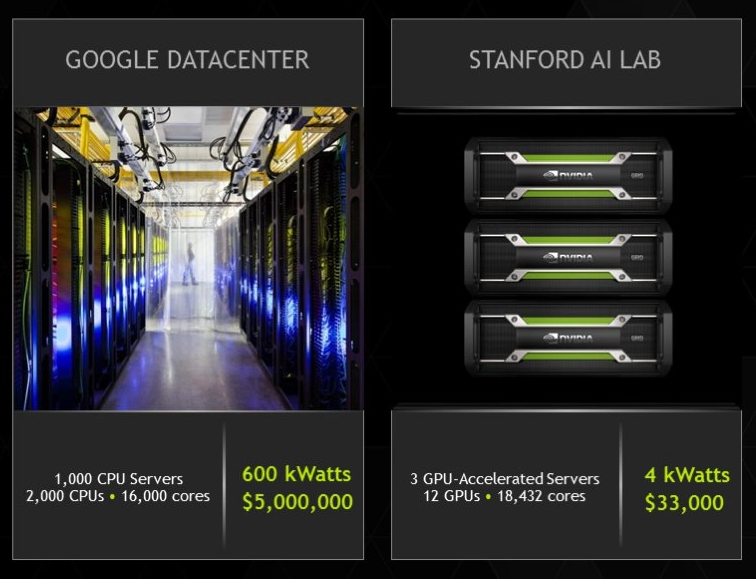

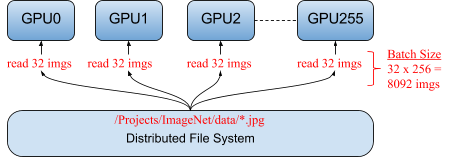

GTC Silicon Valley-2019: Accelerating Distributed Deep Learning Inference on multi-GPU with Hadoop-Spark | NVIDIA Developer

Distributed GPU Rendering on the Blockchain is The New Normal, and It's Much Cheaper Than AWS | TechPowerUp

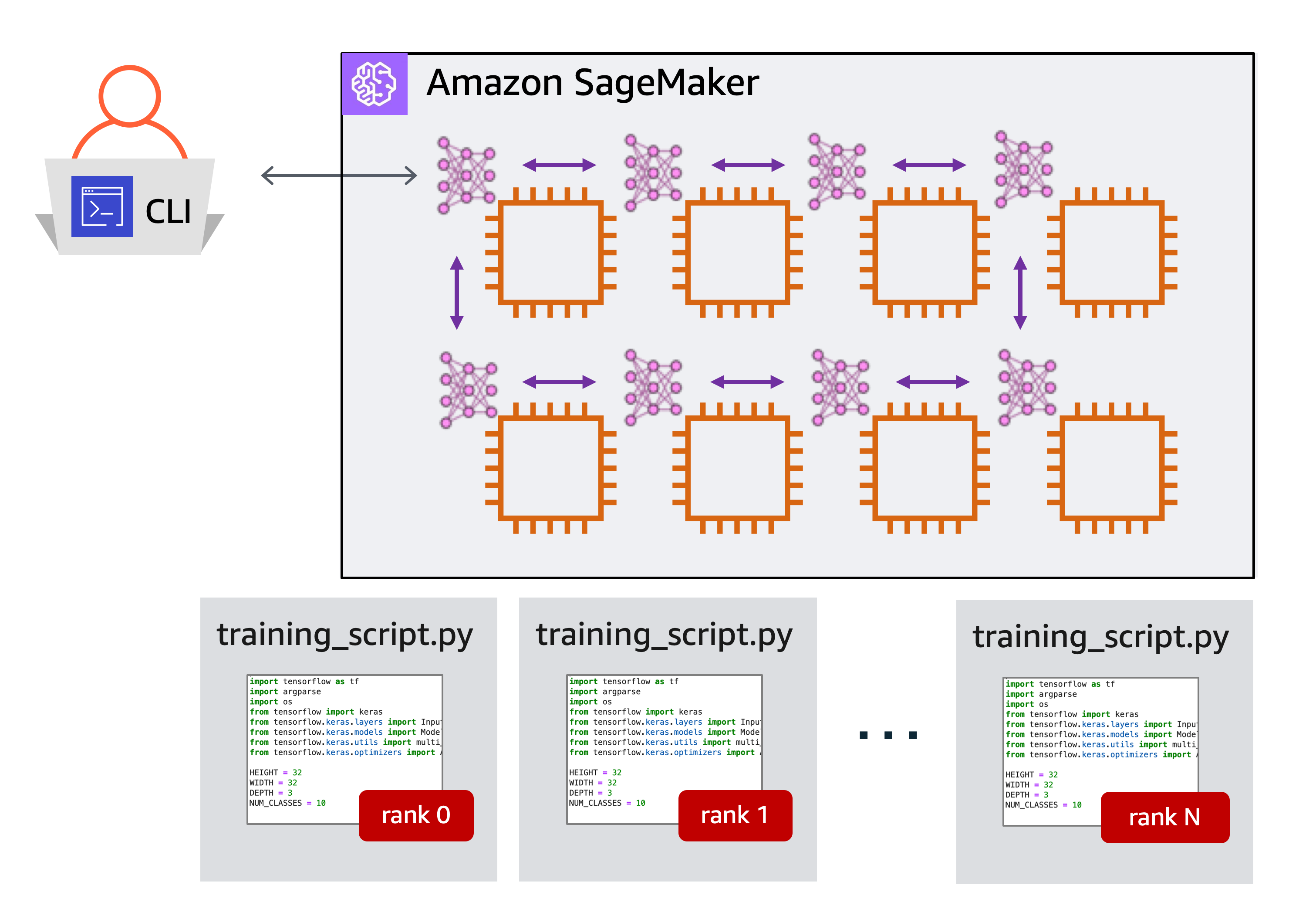

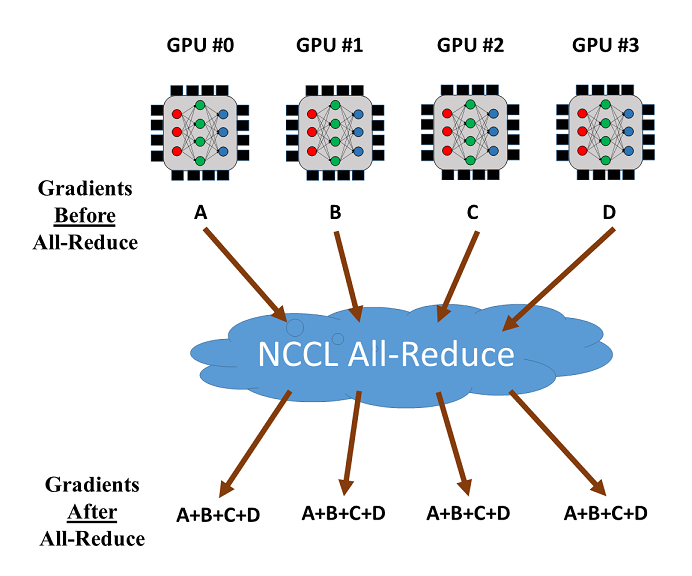

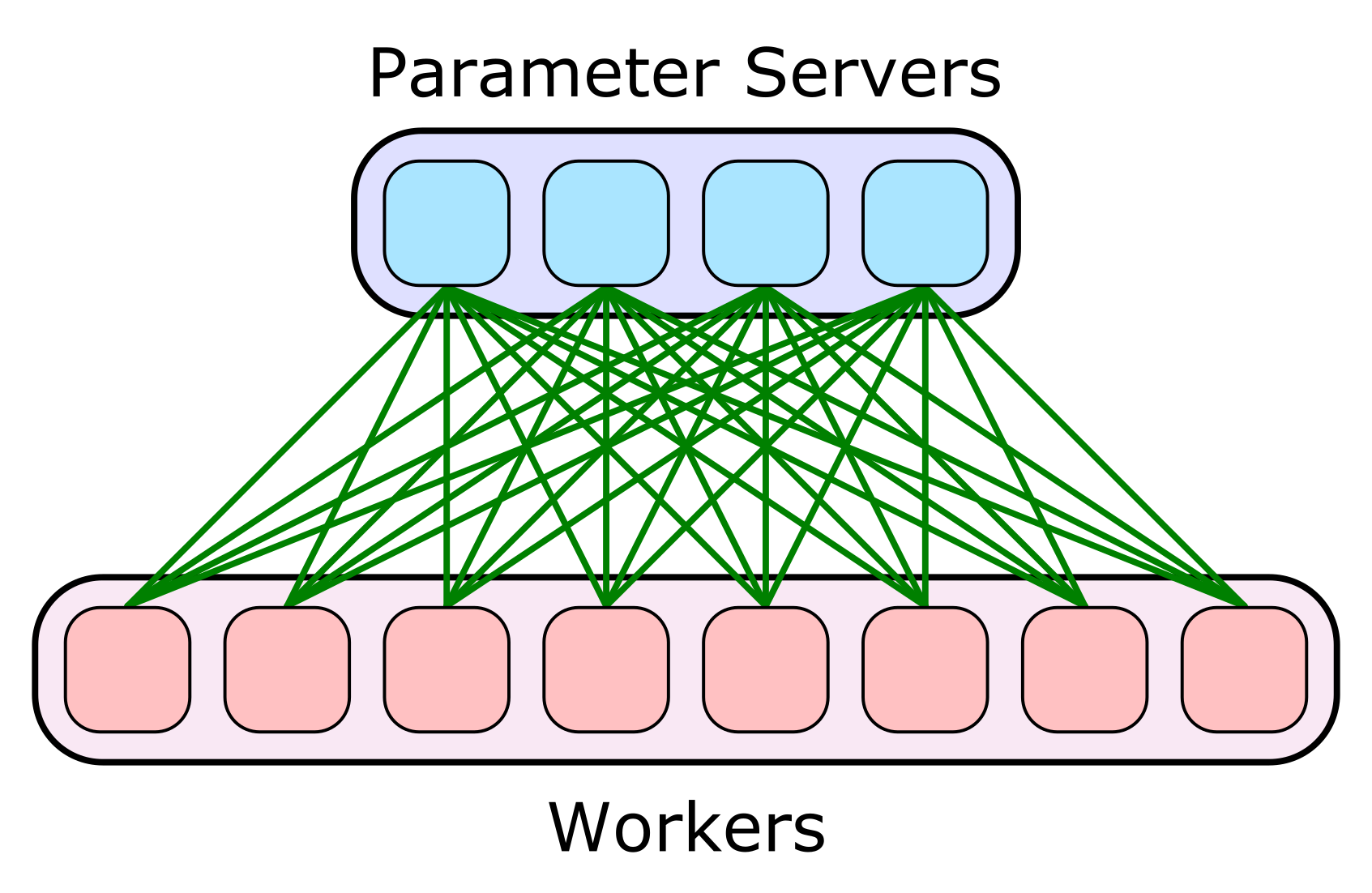

Multi-GPU and distributed training using Horovod in Amazon SageMaker Pipe mode | AWS Machine Learning Blog

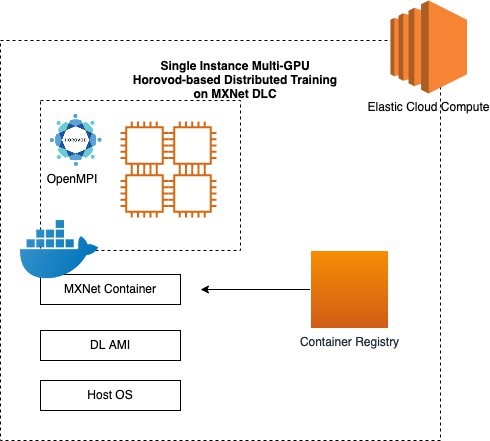

How to run distributed training using Horovod and MXNet on AWS DL Containers and AWS Deep Learning AMIs | AWS Machine Learning Blog